What is the Digital Services Act (DSA)?

The Digital Services Act (DSA) introduces rules for online services used by European citizens in their daily lives. The rules protect all EU consumers equally, both against illegal goods, content or services and with regard to their fundamental rights.

The DSA aims to build a safer and fairer online world. The DSA ensures:

- transparency of content removal decisions and orders

- publicly available reports on how automated content moderation is used and its error rate

- harmonization of responses to illegal content online

- fewer dark patterns online

- a ban on targeted advertising using sensitive data or data of minors

- greater transparency for users about their information flow, such as information on the parameters of recommender systems and accessible terms and conditions

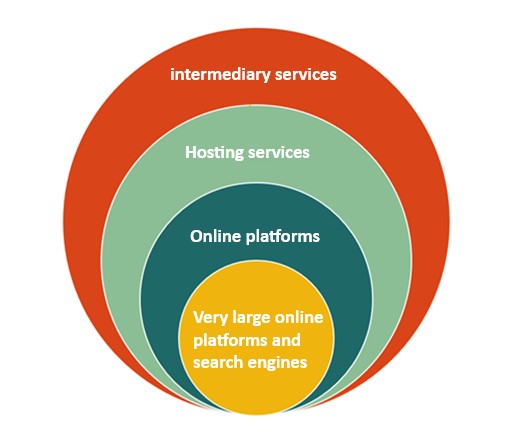

In the context of the DSA, digital services refer to intermediary services, such as hosting services, online marketplaces and social media networks.

Which providers are covered?

All online intermediaries and platforms in the EU must comply with the DSA. The DSA follows a proportionate approach, which means that the obligations assigned to the different online players match their role, size and impact in the online ecosystem.

Micro and small companies have lighter requirements in proportion to their size, which keeps them accountable while helping them scale up. Bigger platforms have greater responsibilities and therefore more obligations.

The DSA recognises that the biggest online platforms – those with over 45 million monthly users in the EU – play a significant role in our societies and democracies. They must therefore follow specific rules to ensure they do not pose unintentional risks to us, such as amplifying illegal content and shaping opinion at scale, and to minimize the possibility of malicious actors using them to inflict harm on Europe.

In particular, these large platforms must identify and analyze widespread risks. These risks include:

- The spreading of illegal content, as defined in national or EU laws

- Threats to fundamental rights, such as freedom of expression

- Threats to media freedom and pluralism, public security and electoral processes

- Gender-based violence, public health, protection of minors and physical and mental wellbeing

How does the DSA tackle illegal content online?

The DSA requires platforms to have easy-to-use flagging mechanisms for illegal content. Platforms should process reports of illegal content in a timely manner and inform both the user who flagged the content and the user who published it of their decision and any further action.

Does the Digital Services Act define what is illegal online?

No. The new rules set out an EU-wide framework to detect, flag and remove illegal content, as well as new risk assessment obligations for very large online platforms and search engines to identify how illegal content spreads on their service.

What constitutes illegal content is defined in other laws, either at EU level or at national level. For example, terrorist content, child sexual abuse material and illegal hate speech are defined at EU level. Where content is illegal only in a given Member State, as a general rule it should only be removed in the territory where it is illegal.

What is changing in regard to content moderation?

Firstly, the DSA obliges platforms to have a point of contact for users, such as email addresses, instant messages, or chatbots. Online platforms must also ensure that contact is quick and direct and may not rely solely on automated tools, making it easier for users to reach platforms if they wish to make a complaint.

Secondly, online platforms must ensure that complaints are handled by qualified staff in a timely and non-discriminatory manner. Online platforms must also provide clear and specific reasons for their moderation decisions.

Thirdly, if a user chooses to have a decision reviewed, this must be handled free of charge via a platform’s internal complaints system.

At present, the only way to settle a dispute between user and platform is through the courts. From 17 February 2024, when the DSA fully applies, users are entitled to out-of-court dispute settlement. The cost of this should be affordable and borne by the platform, unless the out-of-court dispute settlement body finds that the user manifestly acted in bad faith.

How does the EU protect children on online platforms?

While the EU already has some rules to protect children online, such as those found in the Audiovisual Media Services Directive, the DSA introduces specific obligations for platforms.

Among other obligations, the DSA requires intermediary services that are primarily directed at or used by minors to make efforts to ensure their terms and conditions are easily understandable by minors.

Moreover, online platforms used by minors should:

- design their interface with the highest level of privacy, security and safety for minors or participate in codes of conduct for protecting minors;

- consider the best practices and available guidance, such as the new European strategy for a better internet for kids (BIK+);

- not present advertisements to minors based on profiling.

Very large online platforms and search engines (VLOPs and VLOSEs) must make additional efforts to protect minors.

This includes making sure their risk assessment covers fundamental rights, which include the rights of the child. They should assess how easy it is for children and adolescents to understand how their service works, and whether they may be exposed to content that could impair their physical or mental wellbeing, or moral development.

How will the DSA ensure that platforms comply with the rules?

- The European Commission supervises very large online platforms and search engines; all others are the responsibility of national Digital Services Coordinators (DSCs), depending on where the company in question is based.

- Violations of DSA obligations (especially by very large platforms) can lead to significant sanctions. These range from high fines to suspension of services in Europe.

Information about the Digital Services Act and how the new rules affect consumers, businesses and online platforms can be found on the official website of the European Commission.